Apple has officially scrapped plans to scan images to detect known Child Sexual Abuse Material (CSAM) stored in iCloud Photos, which would have allowed Apple to report detected material to law enforcement agencies, with the iPhone maker saying on Tuesday […]


Child Safety


Apple has released iOS 15.5 today, bringing with it the iPhone maker’s child Communication Safety in Messages tool to users in Australia, Canada, New Zealand and the UK, marking the first international expansion of the tool that warns children and their parents […]

It was reported on Wednesday that Apple is preparing to bring its child Communication Safety in Messages feature to the UK, marking the first international expansion of the tool that warns children and their parents when a child receives or […]

Apple is preparing to bring its child Communication Safety in Messages feature to the UK, marking the first international expansion of the tool that warns children and their parents when a child receives or sends sexually explicit photos through iMessage. […]

Apple has released iOS 15.2, bringing App Privacy Reports in Settings to see how often apps have accessed location, photos, camera, microphone, and contacts, a new Macro photo control for the iPhone 13 Pro, the new Apple Music Voice Plan, […]

Apple has released iOS 15.2 and iPadOS 15.2 for iPhone and iPad, bringing Communication Safety in Messages, a new Macro photo control for the iPhone 13 Pro, App Privacy Reports in Settings to see how often apps have accessed location, […]

Apple has released the second developer beta of its upcoming iOS 15.2 software update, confirming that its new Communication Safety in Messages feature will be enabled upon release, which includes tools to warn children and their parents when a child receives […]

Apple announced several new child safety features last week, including iMessage warnings that alert children and parents when a sexually explicit image is sent or received on a device connected to iCloud Family Sharing, automatic blurring of explicit images sent […]

Apple has announced several new child safety features coming to iPhone, iPad, and Mac later this year, including iMessage warnings that alert children and parents when a sexually explicit image is sent or received on a device connected to iCloud […]



The Apple Post publishes the latest Apple news, iPhone leaks, Mac rumors and in-depth HomeKit guides, sharing coverage and analysis on all things Apple.

Read the day’s latest stories and receive breaking news alerts with the theapplepost.com app, available on the App Store.

Featured

- Apple reveals Eye Tracking coming to iPhone and iPad... (15/05/2024)

- Apple Vision Pro to launch in countries outside the US following... (13/05/2024)

- Apple releases iOS 17.5 with Cross-Platform Tracking... (13/05/2024)

- Apple finalizing agreement with OpenAI to use AI technology... (11/05/2024)

- iPhone 16 Pro rumored to feature new display with 20% higher... (11/05/2024)

How-To

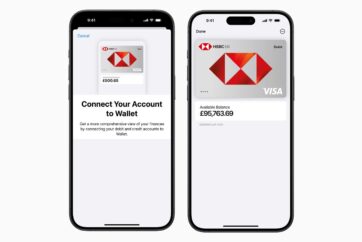

- How to see your bank balance in the Wallet app on iPhone25/10/2023

- How to send a voice message in the Messages app on iPhone09/06/2023

- How to send message effects on iPhone31/12/2022

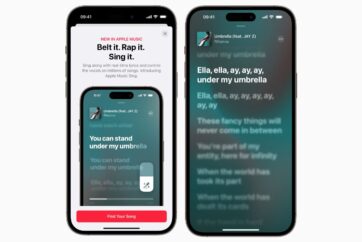

- How to use Apple Music Sing on iPhone31/12/2022

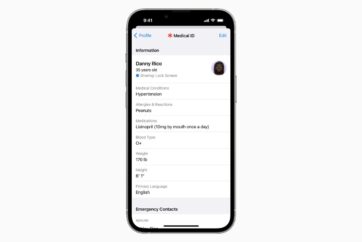

- How to create a Medical ID on iPhone28/12/2022

- How to see your bank balance in the Wallet app on iPhone