Apple responds to privacy concerns over new CSAM photo scanning feature

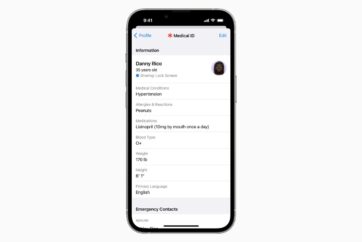

Apple announced several new child safety features last week, including iMessage warnings that alert children and parents when a sexually explicit image is sent or received on a device connected to iCloud Family Sharing, automatic blurring of explicit images sent in Messages, detection of known Child Sexual Abuse Material (CSAM) in a user’s iCloud Photos library, and more.

Following an open letter that called on Apple to halt development of the new features over user privacy concerns, the company has now published an FAQ document in an effort to provide clarity and transparency on the “expanded protections for children.”

“We want to protect children from predators who use communication tools to recruit and exploit them, and limit the spread of Child Sexual Abuse Material (CSAM). Since we announced these features, many stakeholders including privacy organizations and child safety organizations have expressed their support of this new solution,” the document reads, adding that the company has also received questions on the new features, which it aims to answer with this new document.

The document emphasizes that communication safety in Messages is only available for accounts set up as families in iCloud, and that CSAM photo scanning applies only to photos the user chooses to upload to iCloud Photos. It does not work for users who have iCloud Photos disabled.

See Apple’s full Expanded Protections for Children document here.

Addressing concerns over governments forcing Apple to add non-CSAM images to the detection list, the company says it would refuse any such demands, noting that the CSAM detection capability is built solely to detect known CSAM images stored in iCloud Photos that have been identified by experts at NCMEC and other child safety groups.