Apple announces new child safety features, including photo scanning, iMessage warnings, more

Apple has announced several new child safety features coming to iPhone, iPad, and Mac later this year. They include iMessage warnings that alert children and parents when a sexually explicit image is sent or received on a device connected to iCloud Family Sharing, automatic blurring of explicit images sent in Messages, detection of Child Sexual Abuse Material (CSAM) in a user’s photo library, and more.

The new features will launch first in the United States and expand to other regions in the future, with Apple saying they will “evolve and expand over time.” Users will also be able to report CSAM through Siri and Search.

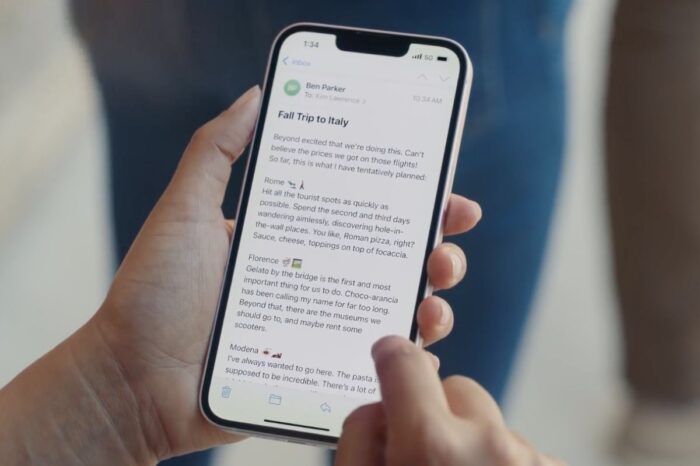

When a child receives an explicit image, Apple says Messages will show a warning saying the image “may be sensitive.” If the child taps “View photo,” they’ll see a pop-up message that informs them why the image is considered sensitive. Depending on the age of the recipient, parents in an iCloud Family Sharing group will have the option to receive a notification if their child proceeds to view the sensitive photo or if they choose to send a sexually explicit photo to another contact after being warned.

Image: Apple

As part of its new child safety initiative, Apple has announced that, beginning with iOS 15, it will be able to detect known child pornography and related sexual abuse material stored in iCloud Photos. Detected material will be flagged and reported to the National Center for Missing and Exploited Children (NCMEC), a non-profit organization that works in collaboration with U.S. law enforcement agencies.

Apple says that before an image is stored in iCloud Photos, it will go through an on-device matching process against a set of known CSAM hashes.

Apple’s method of detecting known CSAM is designed with user privacy in mind. Instead of scanning images in the cloud, the system performs on-device matching using a database of known CSAM image hashes provided by NCMEC and other child safety organizations. Apple further transforms this database into an unreadable set of hashes that is securely stored on users’ devices.
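The basic idea of matching against a database of known hashes can be sketched in a few lines of Python. This is only an illustration under loud assumptions: SHA-256 stands in for whatever image hash Apple actually uses (so it only matches byte-identical files), the database here is a plain readable set rather than the unreadable, transformed one Apple describes, and the names `image_hash`, `KNOWN_HASHES`, and `matches_known_hash` are hypothetical.

```python
import hashlib


def image_hash(image_bytes: bytes) -> str:
    # Stand-in hash (assumption): SHA-256 over the raw bytes.
    # It only matches byte-identical files and is not Apple's hash function.
    return hashlib.sha256(image_bytes).hexdigest()


# Hypothetical database of known hashes, built from placeholder byte strings.
KNOWN_HASHES = {
    image_hash(b"known-image-1"),
    image_hash(b"known-image-2"),
}


def matches_known_hash(image_bytes: bytes, known_hashes: set = KNOWN_HASHES) -> bool:
    # The image itself is never compared, only its hash.
    return image_hash(image_bytes) in known_hashes
```

In this simplified version the device learns the result of the lookup directly, which is exactly what the cryptographic layers described by Apple are meant to avoid.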

Apple touts the system as very accurate, claiming an error rate of less than one in one trillion accounts per year.

Before an image is stored in iCloud Photos, an on-device matching process is performed for that image against the known CSAM hashes. This matching process is powered by a cryptographic technology called private set intersection, which determines if there is a match without revealing the result. The device creates a cryptographic safety voucher that encodes the match result along with additional encrypted data about the image. This voucher is uploaded to iCloud Photos along with the image.
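To give a feel for how two parties can compare sets without exchanging the items themselves, here is a toy Diffie-Hellman-style private set intersection in Python. It is a generic textbook construction, not Apple's protocol: in this sketch the client ends up learning the intersection, whereas Apple describes a design in which the match result is not revealed on the device. The prime `P`, the function names, and the single-process simulation of both parties are all assumptions made for illustration, and the parameters are far too small for real use.

```python
import hashlib
import math
import secrets

P = 2**127 - 1  # a Mersenne prime; toy-sized, not secure


def hash_to_group(item: bytes) -> int:
    # Map an item to an integer modulo P.
    return int.from_bytes(hashlib.sha256(item).digest(), "big") % P


def random_exponent() -> int:
    # Pick a secret exponent coprime to P - 1 so that blinding is a bijection.
    while True:
        k = secrets.randbelow(P - 3) + 2
        if math.gcd(k, P - 1) == 1:
            return k


def blind(items, key):
    # Hash each item, then raise it to the secret key: H(x)^key mod P.
    return [pow(hash_to_group(i), key, P) for i in items]


def reblind(values, key):
    # Apply a second secret key to already-blinded values.
    return [pow(v, key, P) for v in values]


def private_intersection(client_items, server_items):
    a = random_exponent()  # client's secret
    b = random_exponent()  # server's secret
    # Client -> server: H(x)^a
    client_blinded = blind(client_items, a)
    # Server -> client: (H(x)^a)^b in the same order, plus H(y)^b
    client_double = reblind(client_blinded, b)
    server_blinded = blind(server_items, b)
    # Client computes (H(y)^b)^a and compares doubly blinded values.
    server_double = set(reblind(server_blinded, a))
    return [x for x, v in zip(client_items, client_double) if v in server_double]
```

Because exponentiation commutes, an item held by both sides produces the same doubly blinded value, while neither side ever sees the other's raw items or hashes.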

In an update to its Child Safety webpage, Apple says that by using another technology called threshold secret sharing, the system ensures the contents of the safety vouchers cannot be interpreted by Apple unless the iCloud Photos account crosses a threshold of known CSAM content.

Only when the threshold is exceeded does the cryptographic technology allow Apple to interpret the contents of the safety vouchers associated with the matching CSAM images. Apple then manually reviews each report to confirm there is a match, disables the user’s account, and sends a report to NCMEC. If a user feels their account has been mistakenly flagged, they can file an appeal to have their account reinstated.
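The threshold behavior described above can be illustrated with Shamir's secret sharing, a standard threshold scheme. Apple's announcement does not spell out its exact construction, so treat this as a sketch of the general idea only: a secret is split into shares, and it can be recovered once a threshold number of shares is available but not with fewer. The field prime, the function names, and the threshold of 3 in the usage example are all hypothetical.

```python
import secrets

PRIME = 2**127 - 1  # field modulus (toy-sized assumption)


def split_secret(secret: int, threshold: int, num_shares: int):
    # Random polynomial of degree threshold - 1 whose constant term is the secret.
    coeffs = [secret] + [secrets.randbelow(PRIME) for _ in range(threshold - 1)]
    shares = []
    for x in range(1, num_shares + 1):
        y = 0
        for c in reversed(coeffs):  # Horner's rule
            y = (y * x + c) % PRIME
        shares.append((x, y))
    return shares


def recover_secret(shares):
    # Lagrange interpolation evaluated at x = 0.
    secret = 0
    for i, (xi, yi) in enumerate(shares):
        num, den = 1, 1
        for j, (xj, _) in enumerate(shares):
            if i != j:
                num = (num * -xj) % PRIME
                den = (den * (xi - xj)) % PRIME
        # Modular inverse via Fermat's little theorem.
        secret = (secret + yi * num * pow(den, PRIME - 2, PRIME)) % PRIME
    return secret


# Usage: any 3 of the 5 shares recover the secret.
shares = split_secret(123456789, threshold=3, num_shares=5)
recovered = recover_secret(shares[:3])
```

Interpolating with only two of these shares yields an unrelated value with overwhelming probability, which mirrors the claim that nothing can be interpreted until the threshold is crossed.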